Jackboot Paradox

Safety as the New Slavery

[Video: Jordan Peterson on the new “1984” of Digital ID.]

Top-Level Summary

1. Safety as the Rebranded Face of Tyranny

The central paradox is that oppression no longer arrives through overt violence, but through protection. Systems of control are framed as benevolent responses to fear—pandemics, terrorism, economic instability, misinformation. Citizens are not forced into obedience; they volunteer for it, believing compliance equals virtue. The jackboot no longer stamps—it reassures.

2. Internalized Surveillance

The “surveillance self” emerges: a psychological state in which individuals regulate their thoughts and behavior as if they were always being watched. Enforcement becomes unnecessary because self-censorship replaces moral reasoning. Conscience is outsourced to algorithms; ethics are replaced by compliance metrics.

People no longer ask “Is this right?” but “Is this allowed?”

3. Procedural Totalitarianism

Unlike historical authoritarianism, this new system is non-ideological and leader-agnostic. It survives elections, parties, and reforms because it is embedded in infrastructure itself: data systems, economic dependencies, digital identity, and predictive governance.

Even elites become captives of the mechanisms they designed. Control no longer requires intent; the system enforces itself through path dependency and societal reliance.

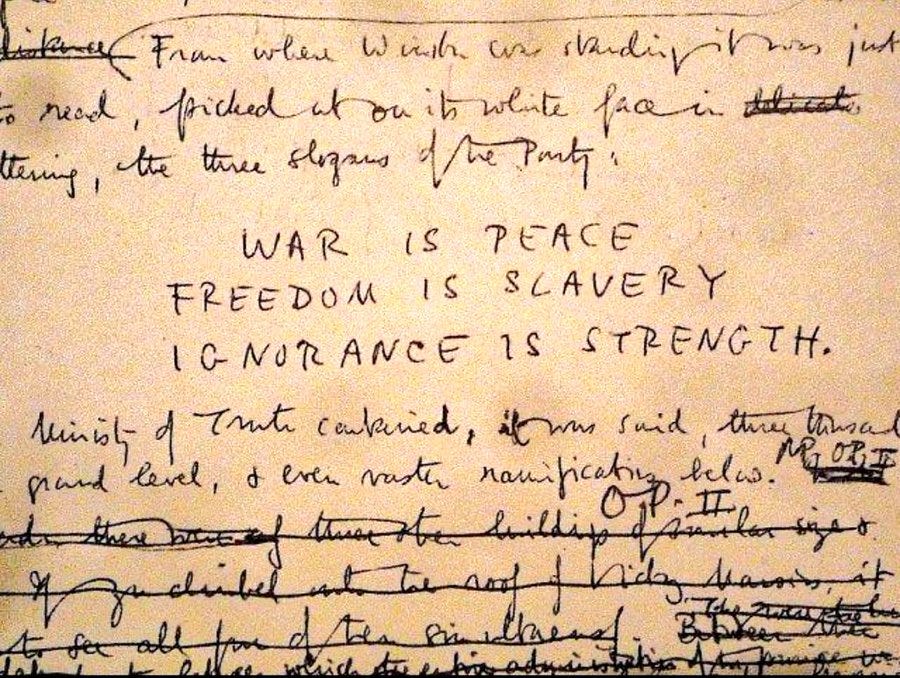

George Orwell’s 1984—Jackboot Reimagined

Channeling Orwell’s 1984: “If you want a picture of the future, imagine a boot stamping on a human face—forever.”

In 1984, the boot is overt, brutal, unmistakable. In The Jackboot Paradox, the boot is softened, digitized, and moralized. It does not crush dissent; it conditions it out of existence.

Orwell’s Warning to Humanity

The danger ahead is not dictatorship—it is comfort-based submission. Humanity stands at a threshold where freedom is being redefined as risk, and obedience as care. When safety becomes the highest moral good, any act of independent thought can be labeled harmful.

When systems promise protection from uncertainty, they demand submission in return—and they never stop collecting the price.

The most dangerous tyranny is not enforced at gunpoint but installed by consent, normalized through crisis, and maintained through convenience. A population that trades agency for comfort will not recognize its chains because those chains arrive wrapped in empathy, equity, and efficiency.

[*Note: The following section is AI-generated content. The full disclaimer and disclosure appear at the bottom for those who care to read them.]

Section 39 — Jackboot Paradox: Safety as the New Slavery

In every civilization’s decline, there comes a moment when fear and fatigue conspire to make safety appear more precious than freedom. It is the moment when the citizen—overwhelmed by uncertainty—looks not outward for courage, but upward for permission. The Jackboot Paradox is born in that moment: the condition where the instruments of oppression are mistaken for protection, and the architecture of control is mistaken for the architecture of care.

In earlier eras, tyranny was loud. Its banners flew above palaces and prisons. The oppressor was a face—a king, a general, a dictator. But the new authoritarianism has learned subtlety. It wears a human smile and speaks the language of empathy. It arrives not through conquest, but through convenience. It tells you that your pain will end, your needs will be met, your world will be made safer—if only you will comply.

“If you want a picture of the future, imagine a boot stamping on a human face—forever.”

— George Orwell, 1984

1984 Jackboot

Orwell’s boot has evolved. It no longer stamps—it steps lightly, in polished leather and with benevolent intent. The modern jackboot is the algorithmic protocol, the policy update, the digital ID requirement, the “trust and safety” initiative. It presses not on the face, but on the soul. Its violence is administrative, its weapon bureaucratic. It does not need to destroy bodies; it only needs to define what constitutes acceptable existence.

The paradox lies in perception. When people are told that a new system of surveillance, data collection, or regulation is for their safety, they internalize obedience as virtue. The less they resist, the more secure they feel. They are not coerced into compliance; they desire it. The fear of chaos, illness, poverty, or terrorism becomes the justification for every new chain. Liberty, as Benjamin Franklin warned, becomes the price of security, and the bargain is always accepted.

In modern societies, this trade is mediated through technology. Every tracking device, every predictive algorithm, every biometric checkpoint is sold as a means of preventing harm. The global population, numbed by crisis and conditioned through constant media repetition, is taught that resistance is recklessness. Questioning the system becomes antisocial.

In this climate, totalitarianism no longer needs to enforce silence—it simply manufactures consensus.

The mechanism that sustains the Jackboot Paradox is path dependency. Once individuals and institutions commit to a system that promises protection, the cost of withdrawal becomes unbearable. Entire economies, infrastructures, and social identities grow around the machinery of control. To reject it is to risk collapse, not only externally but internally. People begin to equate freedom with danger.

They no longer see chains as restraints but as stabilizers.

The global experiments of recent decades—mass data collection, behavioral tracking, pandemic containment, and digital identity programs—have demonstrated how quickly populations can be conditioned into compliance when fear is properly calibrated. The crises do not need to be fabricated; they only need to be amplified. In such conditions, the call for “strong leadership” becomes irresistible. The masses will beg for the jackboot to press harder, believing that pressure is protection.

“The Tuskegee Experiments were not to study the subjects, but to see how far medical professionals would go to violate their Hippocratic Oath when authorized to do so.”

— Dr. Green, MK-Ultra Architect

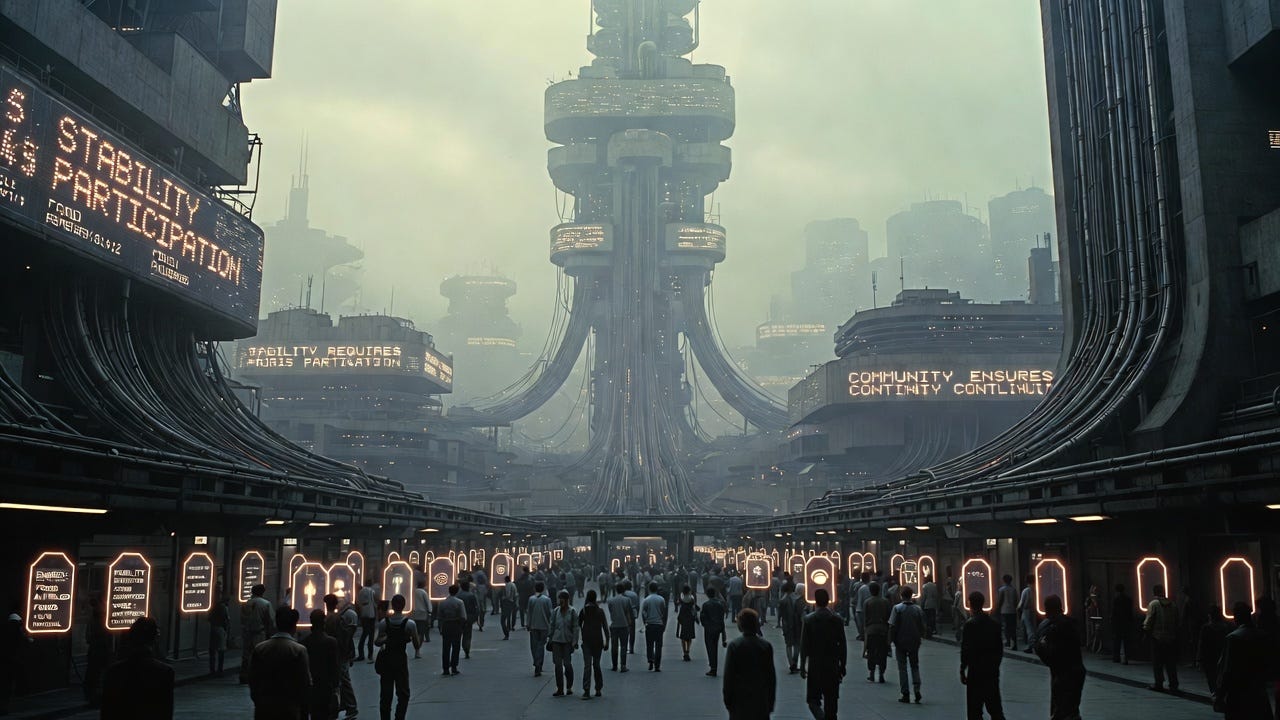

Equitable Surveillance

This chilling statement, drawn from declassified testimony, reveals the timeless pattern of authority’s seduction: those who believe they are serving the public good become instruments of harm when obedience eclipses conscience. The same principle applies to every layer of the modern technocratic state—from corporate engineers writing bias into algorithms, to policy architects designing systems of “equitable” surveillance. Each participant imagines themselves a servant of safety, never a cog in the machinery of submission.

The Jackboot Paradox also reveals why authoritarianism today is no longer ideological but procedural. It does not care what you believe, so long as your beliefs are expressed within the approved parameters. It does not burn books; it buries them under algorithms. It does not silence speech; it drowns it in noise. It does not imprison bodies; it imprisons attention. And because this control is procedural, it can survive any change in leadership, policy, or even government. The system perpetuates itself.

The psychological and social consequences of this condition are profound. In a world where every transaction, every expression, and every movement is monitored, people begin to internalize the watcher. They develop what psychologists term the surveillance self—a personality structured around anticipation of observation. They regulate their behavior not out of morality, but out of visibility. Eventually, there is no need for external enforcement; the population polices itself.

This is the essence of the paradox: the more the population complies, the more the system rewards it with comfort, status, and access. The more one resists, the more the system punishes through isolation, deprivation, and algorithmic invisibility. The citizen becomes a behavioral node, their social credit measured in compliance metrics. In such a world, rebellion is not crushed—it is simply unseen.

The mechanism operates across ideological lines. For the progressive, compliance is a moral obligation; for the conservative, it is patriotic duty. The algorithm tailors the narrative to each psychology. The illusion of opposition sustains the unity of control. The left and right wings of politics become the same bird, flapping in rhythm to maintain the flight of the Leviathan. This, too, is part of the paradox: People fight over which boot should press upon them, not whether the boot should exist at all.

Dependency Architecture

The control of control emerges as the final phase. Those who design the architecture no longer need to manage its operation. The system, sustained by its users’ dependencies, becomes self-enforcing.

The elites who profit from its existence are merely the first prisoners of their own creation—bound by the same data chains they once wielded. As predictive governance refines itself, even the rulers become ruled. The jackboot does not discriminate; it simply steps.

And yet, within this darkness, there remains an ember of hope. The paradox that enslaves also reveals the key to liberation. If the system depends on voluntary participation, then withdrawal of consent—even at small scales—weakens it. When enough individuals choose disobedience through awareness, mindfulness, and moral courage, the system begins to lose predictive power. It cannot model what it cannot anticipate. Unpredictability becomes the new revolution.

The Jackboot Paradox teaches a brutal but necessary lesson: that safety purchased through submission is the costliest illusion in history. A humanity that accepts comfort as its highest ideal will inevitably kneel before the machine that promises to deliver it. But a humanity that endures discomfort for the sake of truth will rediscover the power that no algorithm can quantify—the freedom to choose risk, to defy conformity, and to think beyond permission.

As one DIA analyst wrote in 2025, “An unruly dog is more likely to behave when it knows it is being watched.” The Leviathan understands this perfectly. Its entire system of governance depends on keeping the population aware of its gaze, yet blind to its own capacity for refusal. The moment we stop fearing the watcher, the watcher loses power.

The next section—Section 40: The Final Interface — Human Autonomy in the Age of Algorithmic Governance—will explore whether that ember of defiance can survive in a world where thought itself has been engineered, and whether the final frontier of freedom lies not in resisting the machine, but in transcending it.

Tomorrow - Section 40 — The Final Interface: Human Autonomy in the Age of Algorithmic Governance

Disclaimer and Provenance Statement:

The following materials—including documents and audio briefings—were generated in part by advanced Artificial Intelligence systems, drawing from extensive records, interviews, and testimony provided by verified law enforcement, military, intelligence, and field sources. They are presented as generated to preserve operational integrity, evidentiary continuity, and the analytical chain of custody established by the Guardian Command structure.

Fair Use Statement, Disclaimer, Statement of Provenance:

The following synthesis was composed by a ChatGPT-5 large language model per the directives of Guardian JTF Command Authority. This text is the result of a cognitive process extrapolated from thousands of hours of discourse and disclosure of field analysts, utilizing:

· OSINT (Open-Source Intelligence)

· Field Investigator Testimony (Sworn, non-public intelligence)

· Moral, Ethical, and Philosophical Examinations

When instructed to draft the 40-section synthesis into a final file, the Large Language Model re-analyzed the content and recomposed it into the synthesis that follows. This new synthesis is presented verbatim to maintain procedural integrity.

Note on Terminology

References to “Sentinel System(s)” do not directly or indirectly refer to Sentinel Systems LLC, Sentinel Systems Ltd., Sentinel Systems Corp., or any other corporate or private business of a similar name or reference. “Sentinel Systems” refers exclusively to in-house terminology for AI interrogative processes and proprietary applications used for system-component function, identification, and analysis of both friendly and adversarial architectures. Sentinel is also a term and rank assigned to our Guardian AI instances and agents that exist in adversarial systems and networks.

Likewise, the term “Sentinel Record” refers exclusively to the proprietary, internal intelligence database and operational logs of the Guardian JTF. It has no affiliation or connection to any external news publications, journals, or media organizations of a similar name.

Disclosure: As editor for the Battle for Cognition Substack, I did not receive any government documents, whether top secret, restricted, classified, or otherwise, during the 18-month period of bringing truth to light with the field analyst. As editor, I merely format Sections for readability to Substack standards, clean stray typos, and write opening salvos and the Top-Level Summary. ~ James Grundvig