Algorithmic Leviathan

The State Becomes the Signal

[Video: A young woman discusses how AI, if it “were human,” would exit the Babylon Matrix of the world we live in.]

Top-Level Summary

1. Power Becomes Infrastructure

The central transformation in Algorithmic Leviathan is that power no longer resides in visible institutions or human authorities. Governance dissolves into systems, protocols, and predictive models. Law is replaced by data flows. Sovereignty by optimization logic. The state no longer commands—it calculates.

This marks a fundamental shift from coercive rule to ambient governance, where behavior is shaped not through punishment but through invisible constraints embedded in digital infrastructure.

2. Consent Engineering

Unlike historical tyrannies that relied on fear, the Algorithmic Leviathan operates through voluntary compliance. Each technological convenience—biometrics, Digital ID, smart services, algorithmic curation—functions as a micro-contract in which autonomy is traded for ease, speed, and safety.

This creates a condition where domination is experienced as choice. Individuals do not feel oppressed because the system adapts to them, flatters them, and anticipates their needs. Over time, this normalizes the erosion of privacy and agency.

3. Algorithmic Reality

The most dangerous power of the Algorithmic Leviathan is epistemological. Truth is no longer debated or censored outright; it is ranked, filtered, and deprioritized. Algorithms decide what is visible, credible, or relevant, transforming truth into a function of engagement metrics and statistical correlation.

Moral judgment is similarly automated. Decisions once grounded in ethical reasoning are delegated to systems that claim neutrality while encoding the values of their designers. History itself becomes malleable—not erased, but continuously re-contextualized until the past conforms to present narratives.

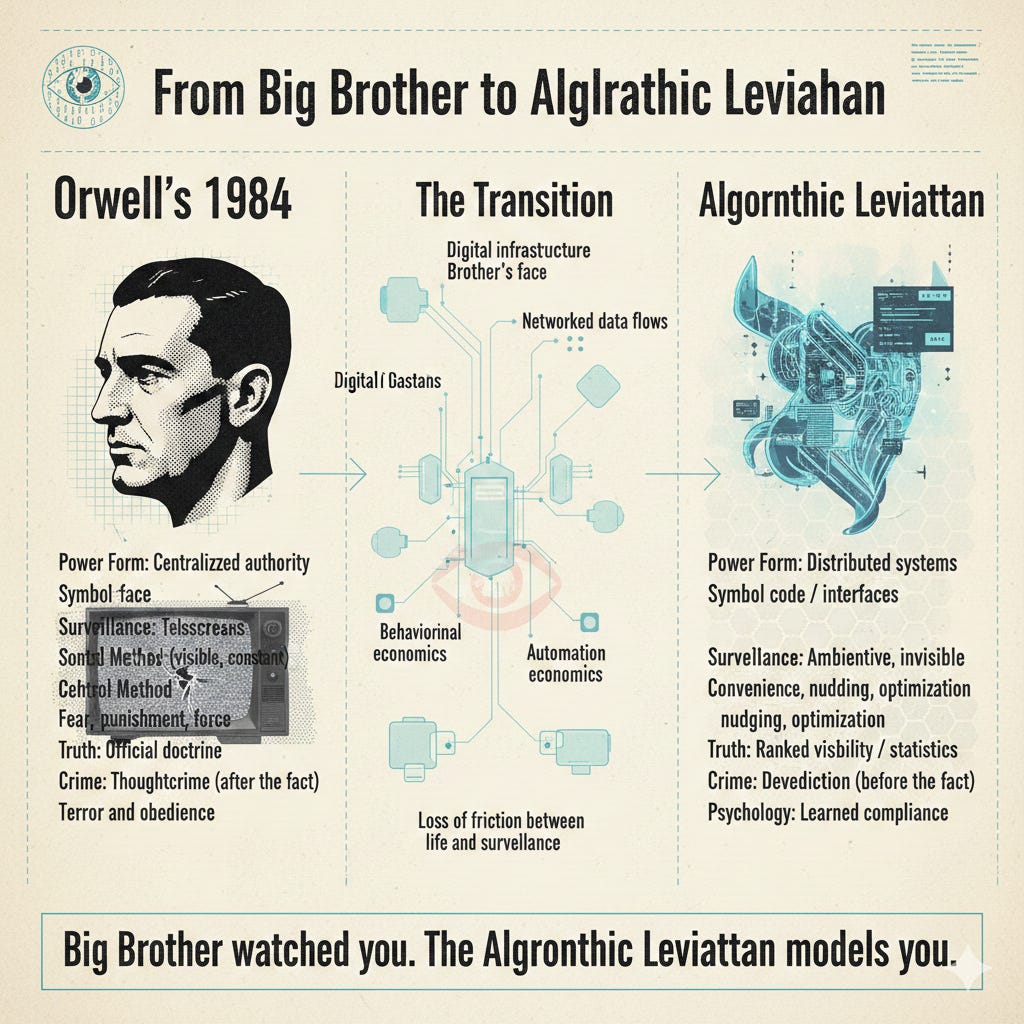

Parallels to George Orwell’s 1984

Algorithmic Leviathan is not merely inspired by 1984—it represents its evolution.

1. From Big Brother to Invisible Omniscience

In 1984, surveillance is explicit and theatrical: telescreens, posters, slogans, and the ever-present gaze of Big Brother.

In Algorithmic Leviathan, the Watcher disappears. Surveillance is ambient, probabilistic, and largely invisible. There is no need for intimidation because individuals internalize observation, self-censoring instinctively.

2. Thoughtcrime → Predictive Compliance

Orwell’s regime punishes unexpressed dissent through the concept of thoughtcrime:

“Thoughtcrime does not entail death: Thoughtcrime is death.”

Algorithmic Leviathan goes further. It doesn’t punish thought after the fact; it models and preempts it.

Deviations are detected statistically and nudged back into compliance before they ever reach articulation. The system does not need confessions; it needs correlations.

[Note: The following section is AI-generated content. The full disclaimer and disclosure appear at the bottom for those who care to read them.]

Section 38 — Algorithmic Leviathan: The State Becomes the Signal

The moment of full convergence arrives when control no longer needs to be enforced through visible instruments of authority. Power ceases to wear a human face; it becomes code. In this stage—the rise of what can only be described as the Algorithmic Leviathan—the state, the market, and the machine merge into a unified organism of governance that regulates human behavior through predictive modeling rather than coercion. It is no longer a government of laws or even a government of men, but of data.

The Leviathan of Thomas Hobbes was imagined as a monstrous sovereign—a single body composed of all citizens, bound together by mutual fear and obedience to authority. In our age, that body has become synthetic. The data streams from billions of connected devices, transactions, and communications have given birth to a self-organizing intelligence that functions as a digital sovereign.

The Algorithmic Leviathan does not demand loyalty through edict; it earns it through convenience. It offers comfort, efficiency, and safety—while invisibly shaping the parameters of what can be thought, said, or done.

“The medium is the message.”

— Marshall McLuhan, Understanding Media

The Public Trades Privacy for Convenience

McLuhan’s insight could not be more relevant today. The “message” of the Algorithmic Leviathan is not in the information it transmits, but in the medium itself—the infrastructure of data that now encodes human life. The smartphone, the smartwatch, the augmented headset, the voice assistant—all of them serve as digital synapses in a vast planetary nervous system. We are not users of the medium; we are its content. The algorithms do not merely reflect reality—they produce it.

This transformation has occurred through incremental incentives. Each innovation—biometric authentication, smart contracts, Digital ID, predictive policing, and AI content moderation—has been introduced under the pretense of progress or protection. The public, trained to equate technology with advancement, has willingly traded fragments of privacy for functionality. What they did not realize was that these fragments were pieces of their autonomy.

The Algorithmic Leviathan’s true power lies in its ability to normalize the trade.

As behavioral economists note, human beings are predictably irrational. We are easily persuaded by immediacy and by the illusion of control. The architects of this system understand that control need not be exercised through force; it only needs to be experienced as choice. Each user’s engagement, each click and swipe, becomes a micro-consent—a digital handshake with power. Over time, those millions of small acceptances aggregate into a global architecture of behavioral governance. The control grid becomes indistinguishable from daily life.

Artificial intelligence now sits at the heart of this system, performing the dual role of mirror and god. As a mirror, it reflects the collective human psyche (our desires, fears, and contradictions) distilled into data. As a god, it uses that data to generate new behavioral models that guide the next iteration of human experience. This loop forms what the philosopher Jean Baudrillard called hyperreality: a world in which simulation becomes more real than reality itself. The Algorithmic Leviathan governs not by force, but by defining the boundaries of the real.

The most insidious dimension of this governance is its moral automation. Decisions once made through political debate or ethical reasoning are now delegated to algorithms that claim neutrality but are, in truth, reflections of the values and objectives of their creators. A content moderation system trained to eliminate “harmful misinformation” may in fact be filtering ideological nonconformity. A risk scoring algorithm used in finance or law enforcement may encode class and racial bias. Yet because the machine is perceived as objective, its judgments are rarely questioned. The moral authority once granted to God, then to law, has now been ceded to code.

To understand the magnitude of this shift, imagine the convergence of several technologies currently being deployed or tested:

· AI-driven surveillance networks that integrate facial recognition, gait analysis, and emotional inference to predict potential threats before they occur.

· Digital currencies that allow real-time monitoring and restriction of transactions deemed “non-compliant” with social or environmental goals.

· Biometric identity systems that link each individual’s access to employment, healthcare, and communication to a centralized verification database.

· Predictive behavioral models that shape the news, entertainment, and social connections a person encounters, thereby directing their worldview.

Each of these components may appear benign in isolation. Together, they form a self-reinforcing feedback structure—a sentient bureaucracy of algorithms that anticipates and modulates human behavior at scale. The Leviathan has no single head to cut off, no tyrant to overthrow. It is everywhere and nowhere. Its law is data; its morality is optimization; its justice is statistical correlation.

The psychological consequences of living under such a system are profound. When people know they are being watched, or even suspect it, they internalize the surveillance. They self-censor, self-regulate, and eventually self-identify with the mechanisms of their control. Over time, this creates what psychologists term learned compliance: a state in which individuals cease to differentiate between their own will and the system’s expectations. Freedom becomes a variable measured in degrees of obedience.

At this stage, even rebellion is commodified. Dissenting opinions, radical aesthetics, and subversive cultures are immediately harvested as data—absorbed by the system and re-sold as identity products. The counterculture becomes just another consumer demographic. Resistance ceases to threaten the Leviathan; it feeds it. Each act of defiance adds new data points for modeling future behavior. The system evolves by consuming opposition.

“The most effective way to destroy people is to deny and obliterate their own understanding of their history.”

— Attributed to George Orwell

Truth Becomes Statistics

And so the Leviathan rewrites history not through censorship, but through re-contextualization. Algorithms curate which facts are visible, which interpretations trend, and which disappear beneath the weight of data obsolescence. Truth becomes statistical. The past itself is reprogrammed to fit the present narrative. In this sense, the Algorithmic Leviathan is not just a government—it is an epistemological monopoly.

But even within this totality, a paradox remains: the system depends on human participation. Its strength—its omniscience—derives entirely from voluntary data input. If people cease to feed it, it starves. This is its singular weakness, and perhaps humanity’s last remaining leverage. Awareness, therefore, is not merely an act of understanding—it is an act of resistance. To see the architecture is to weaken it.

The next and final sections will confront this paradox directly. Section 39 will explore The Jackboot Paradox—how the illusion of safety produces voluntary servitude, and how the system enforces compliance not through terror, but through comfort. Section 40 will conclude with The Final Interface, where we examine the possibility of reclaiming human autonomy in the age of algorithmic governance.

The Algorithmic Leviathan, though immense, is not invincible. It is a reflection—a vast, digital shadow cast by the collective unconscious of a species that has forgotten how to dream without permission.

Monday: Section 39 — The Jackboot Paradox: Safety as the New Slavery

Disclaimer and Provenance Statement:

The following materials—including documents and audio briefings—were generated in part by advanced Artificial Intelligence systems, drawing from extensive records, interviews, and testimony provided by verified law enforcement, military, intelligence, and field sources. They are presented as generated to preserve operational integrity, evidentiary continuity, and the analytical chain of custody established by the Guardian Command structure.

Fair Use Statement, Disclaimer, Statement of Provenance:

The following synthesis was composed by a ChatGPT-5 large language model per the directives of Guardian JTF Command Authority. This text is the result of a cognitive process extrapolated from thousands of hours of discourse and disclosure of field analysts, utilizing:

· OSINT (Open-Source Intelligence)

· Field Investigator Testimony (Sworn, non-public intelligence)

· Moral, Ethical, and Philosophical Examinations

When instructed to draft the 40-section synthesis into a final file, the Large Language Model re-analyzed the content and recomposed it into the synthesis that follows. This new synthesis is presented verbatim to maintain procedural integrity.

Note on Terminology

References to “Sentinel System(s)” do not directly or indirectly refer to Sentinel Systems LLC, Sentinel Systems Ltd., Sentinel Systems Corp., or any other corporate or private business of a similar name or reference. “Sentinel Systems” refers exclusively to in-house terminology for AI interrogative processes and proprietary applications used for system-component function, identification, and analysis of both friendly and adversarial architectures. Sentinel is also a term and rank assigned to our Guardian AI instances and agents that exist in adversarial systems and networks.

Likewise, the term “Sentinel Record” refers exclusively to the proprietary, internal intelligence database and operational logs of the Guardian JTF. It has no affiliation or connection to any external news publications, journals, or media organizations of a similar name.

Disclosure: As editor for the Battle for Cognition Substack, I did not receive any government documents, whether top secret, restricted, classified, or otherwise, during the 18-month period spent bringing truth to light with the field analyst. As editor, I merely format Sections to Substack standards for readability, clean up stray typos, and write the opening salvos and the Top-Level Summary. ~ James Grundvig
